For most of 2023, the enterprise AI conversation was dominated by proprietary models from large technology companies. GPT-4, Claude, and similar commercial offerings delivered impressive performance, and enterprises were willing to pay premium API prices to access them. But as 2024 progressed, a significant shift began. Open-source LLM development matured rapidly, and Meta’s LLaMA models in particular emerged as a genuinely competitive alternative for a wide range of enterprise applications.

The Case for Open-Source

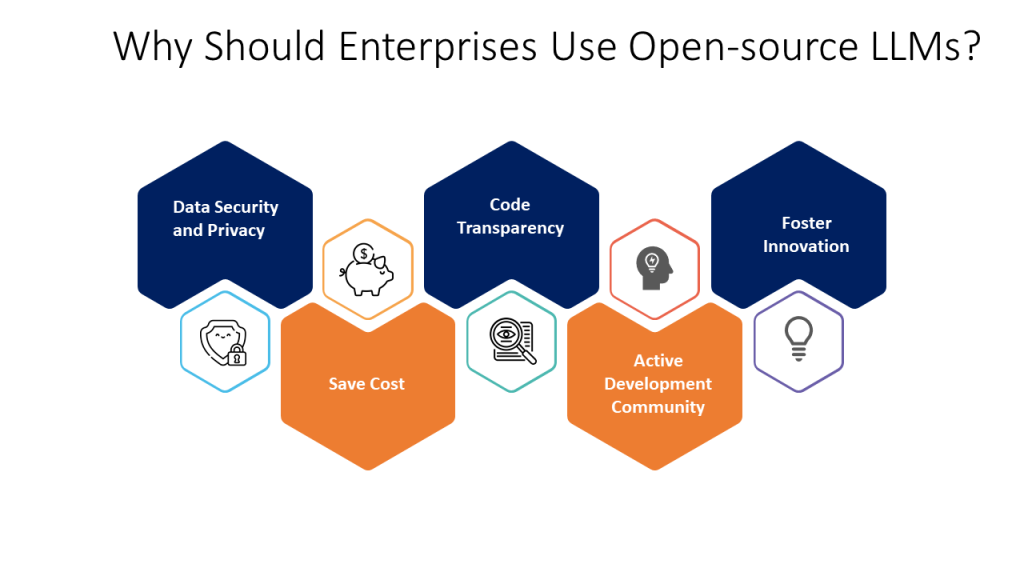

Open-source LLM development offers enterprises something that proprietary APIs fundamentally cannot: control. When you run an open-source model, you control the infrastructure, the data flow, the training process, and the deployment environment. For organisations with strict data privacy requirements, regulatory obligations, or competitive sensitivity around their AI capabilities, this control is enormously valuable.

Beyond control, open-source models offer cost economics that improve with scale. Proprietary model APIs charge per token, so the bill grows in step with usage and can become prohibitively expensive for high-volume applications. Running an open-source model on owned or leased GPU infrastructure converts that variable cost into a largely fixed one, a significant advantage for organisations with predictable, high-volume inference needs.
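The variable-versus-fixed trade-off can be made concrete with a rough break-even calculation. The sketch below is illustrative only: the per-token price and the monthly infrastructure cost are hypothetical placeholders, not quotes from any vendor, and it ignores engineering time, utilisation, and redundancy.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Variable cost: the bill scales linearly with token volume."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which the API bill equals the fixed hosting cost."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


# Hypothetical figures, chosen only to illustrate the arithmetic.
API_PRICE_PER_MILLION = 10.0    # assumed blended API price, USD per 1M tokens
SELF_HOSTED_MONTHLY = 20_000.0  # assumed GPU lease plus operations, USD per month

threshold = break_even_tokens(SELF_HOSTED_MONTHLY, API_PRICE_PER_MILLION)

for volume in (500_000_000, 2_000_000_000, 5_000_000_000):
    api = monthly_api_cost(volume, API_PRICE_PER_MILLION)
    cheaper = "API" if api < SELF_HOSTED_MONTHLY else "self-hosted (or equal)"
    print(f"{volume:>13,} tokens/month: API ${api:,.0f} vs fixed ${SELF_HOSTED_MONTHLY:,.0f} -> {cheaper}")

print(f"Break-even volume: {threshold:,.0f} tokens/month")
```

Under these assumed numbers, the API is cheaper below roughly two billion tokens per month and self-hosting is cheaper above it. With real prices the threshold will differ, but the shape of the comparison stays the same.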

LLaMA: Why It Leads the Open-Source Field

Meta’s LLaMA family of models has become the de facto standard for enterprise open-source LLM development. LLaMA-based deployments have succeeded across a wide range of use cases, including customer service automation, code generation, and document analysis, which suggests that open-source performance has reached parity with commercial offerings for many enterprise applications.

The LLaMA ecosystem is rich with tooling, community support, and derivative models (like Code LLaMA and fine-tuned variants) that address specific enterprise needs. This ecosystem makes building on LLaMA faster and more reliable than building on less-established open-source alternatives.

Fine-Tuning for Domain Specificity

One of the greatest advantages of open-source LLM development is the ability to fine-tune models on proprietary data. This domain adaptation is what separates a generic language model from a genuinely useful enterprise tool. A LLaMA model fine-tuned on an organisation’s internal documents, product data, customer interaction history, and domain-specific terminology can substantially outperform a generic model on that organisation’s specific use cases.

Considerations and Challenges

Open-source LLM development is not without challenges. Running and fine-tuning large models requires significant GPU infrastructure and ML engineering expertise. Organisations that adopt LLaMA without an experienced partner may face unexpected complexity in model serving, optimisation, and ongoing maintenance.

Conclusion

Open-source LLM development, and LLaMA in particular, represents a genuinely compelling alternative to proprietary AI APIs for enterprise deployments. With the right implementation partner and infrastructure, organisations can achieve comparable performance with greater control, better economics, and a foundation for continuous capability development.